Instruction Pipelining-

Before you go through this article, make sure that you have gone through the previous article on Instruction Pipelining.

In instruction pipelining,

- A form of parallelism called instruction-level parallelism is implemented.

- Multiple instructions execute simultaneously.

- Pipelined execution gives higher performance than non-pipelined execution.

Performance of Pipelined Execution-

The following parameters serve as criteria to estimate the performance of pipelined execution-

- Speed Up

- Efficiency

- Throughput

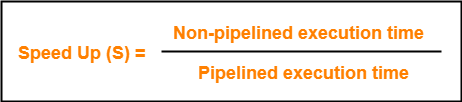

1. Speed Up-

It gives an idea of “how much faster” the pipelined execution is as compared to non-pipelined execution.

It is calculated as-

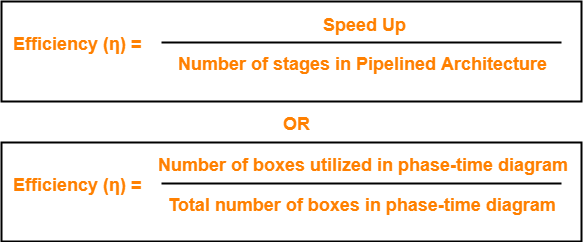

2. Efficiency-

The efficiency of pipelined execution is calculated as-

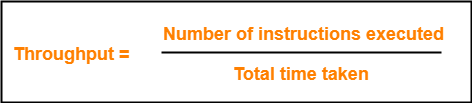

3. Throughput-

Throughput is defined as the number of instructions executed per unit time.

It is calculated as-

Throughput = Number of instructions executed / Total time taken

Calculation of Important Parameters-

Let us learn how to calculate certain important parameters of pipelined architecture.

Consider-

- A pipelined architecture consisting of a k-stage pipeline

- Total number of instructions to be executed = n

Point-01: Calculating Cycle Time-

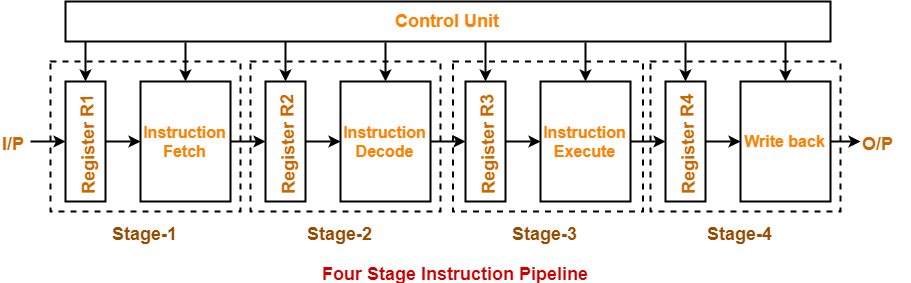

In pipelined architecture,

- There is a global clock that synchronizes the working of all the stages.

- The clock period is set long enough for every stage to complete its work within one cycle, so that all the stages stay synchronized.

- At the beginning of each clock cycle, each stage reads the data from its register and processes it.

- Cycle time is the duration of one clock cycle.

There are two cases possible-

Case-01: All the stages offer the same delay-

If all the stages offer the same delay, then-

Cycle time = Delay offered by one stage including the delay due to its register

Case-02: All the stages do not offer the same delay-

If the stages offer different delays, then-

Cycle time = Maximum delay offered by any stage including the delay due to its register
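Both cases reduce to one rule: the slowest stage, plus its register, sets the clock. The sketch below assumes every stage register has the same delay (`register_delay`) and that all delays are given in the same unit (e.g. ns); the function name and the sample delays are hypothetical.

```python
def cycle_time(stage_delays, register_delay):
    """Return the pipeline cycle time.

    stage_delays   -- delay of each stage (e.g. in ns)
    register_delay -- delay of the register after a stage
                      (assumed identical for every stage)
    """
    # The clock must be long enough for the slowest stage plus its register.
    return max(d + register_delay for d in stage_delays)


# Case-01: all stages offer the same delay
print(cycle_time([20, 20, 20, 20], 5))   # 25
# Case-02: unequal delays, the 90 ns stage decides
print(cycle_time([60, 50, 90, 80], 10))  # 100
```

When the stage delays are equal, `max` simply returns that common delay plus the register delay, so Case-01 is a special case of Case-02.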

Point-02: Calculating Frequency Of Clock-

Frequency of the clock (f) = 1 / Cycle time
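As a quick arithmetic check, the sketch below converts a cycle time in nanoseconds into a frequency in hertz; the function name is hypothetical and the input unit (ns) is an assumption.

```python
def clock_frequency_hz(cycle_time_ns):
    """Clock frequency f = 1 / cycle time, with the cycle time given in ns."""
    return 1e9 / cycle_time_ns  # 1 s = 1e9 ns


# A 100 ns cycle time gives a 10 MHz clock
print(clock_frequency_hz(100) / 1e6)  # 10.0
```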

Point-03: Calculating Non-Pipelined Execution Time-

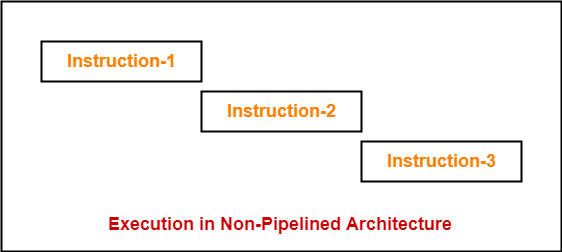

In non-pipelined architecture,

- The instructions execute one after the other.

- The execution of a new instruction begins only after the previous instruction has executed completely.

- So, number of clock cycles taken by each instruction = k clock cycles

Thus,

Non-pipelined execution time

= Total number of instructions x Time taken to execute one instruction

= n x k clock cycles
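The count above can be written as a small helper. This sketch follows the article's model, in which every non-pipelined instruction takes k clock cycles; it additionally assumes those cycles have the same length `cycle_time` as in the pipelined design. Function names and sample values are hypothetical.

```python
def non_pipelined_cycles(n, k):
    """Each of the n instructions uses all k stages before the next one starts."""
    return n * k


def non_pipelined_time(n, k, cycle_time):
    """Total execution time, in the same unit as cycle_time (e.g. ns)."""
    return non_pipelined_cycles(n, k) * cycle_time


# 100 instructions, 4 stages, 100 ns per clock cycle
print(non_pipelined_cycles(100, 4))     # 400
print(non_pipelined_time(100, 4, 100))  # 40000
```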

Point-04: Calculating Pipelined Execution Time-

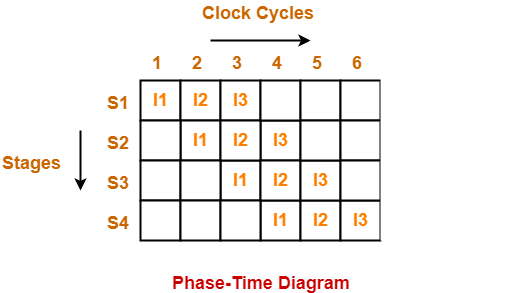

In pipelined architecture,

- Multiple instructions execute in parallel.

- Number of clock cycles taken by the first instruction = k clock cycles

- After the first instruction has completely executed, one instruction completes per clock cycle.

- So, number of clock cycles taken by each remaining instruction = 1 clock cycle

Thus,

Pipelined execution time

= Time taken to execute first instruction + Time taken to execute remaining instructions

= 1 x k clock cycles + (n-1) x 1 clock cycle

= (k + n – 1) clock cycles
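One way to convince yourself of the (k + n – 1) result is to step an ideal pipeline cycle by cycle and count. The sketch below assumes no stalls: in every cycle each instruction advances one stage and a new instruction enters the first stage. Function names are hypothetical.

```python
def pipelined_cycles_formula(n, k):
    """Closed form derived above."""
    return k + n - 1


def simulate_pipeline(n, k):
    """Count the cycles an ideal k-stage pipeline needs to finish n instructions."""
    stages = [None] * k  # stages[i] holds the instruction currently in stage i
    next_instr = 0       # index of the next instruction to enter stage 0
    completed = 0
    cycles = 0
    while completed < n:
        cycles += 1
        # A new instruction (if any remain) enters stage 0 ...
        entering = next_instr if next_instr < n else None
        if entering is not None:
            next_instr += 1
        # ... and every instruction already inside moves forward one stage.
        stages = [entering] + stages[:-1]
        # The instruction in the last stage finishes at the end of this cycle.
        if stages[-1] is not None:
            completed += 1
    return cycles


print(simulate_pipeline(100, 4))         # 103
print(pipelined_cycles_formula(100, 4))  # 103
```

The simulation makes the two parts of the formula visible: the first instruction reaches the last stage after k cycles, and each later instruction leaves exactly one cycle after the previous one.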

Point-05: Calculating Speed Up-

Speed up

= Non-pipelined execution time / Pipelined execution time

= n x k clock cycles / (k + n – 1) clock cycles

= n x k / (k + n – 1)

= n x k / { n + (k – 1) }

= k / { 1 + (k – 1)/n }

- For a very large number of instructions (n → ∞), (k – 1)/n → 0, so speed up → k.

- Practically, the total number of instructions never tends to infinity.

- Therefore, speed up is always less than the number of stages in the pipeline.
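The sketch below evaluates the speed-up formula exactly (using `fractions.Fraction`) and shows it creeping towards k without reaching it. It also computes throughput, assuming throughput is measured as n divided by the whole pipelined execution time of (k + n – 1) cycles; `cycle_time` must be an integer here (e.g. ns). Function names are hypothetical.

```python
from fractions import Fraction


def speed_up(n, k):
    """Speed up = (n * k) / (k + n - 1), both times counted in clock cycles."""
    return Fraction(n * k, k + n - 1)


def throughput(n, k, cycle_time):
    """Instructions completed per unit time over the whole pipelined run.

    cycle_time must be an integer (e.g. ns), so the result is per ns.
    """
    return Fraction(n, (k + n - 1) * cycle_time)


# Speed up of a 4-stage pipeline approaches 4 as n grows
for n in (1, 10, 100, 10_000):
    print(n, float(speed_up(n, 4)))

# 100 instructions, 4 stages, 100 ns cycle: instructions per second
print(float(throughput(100, 4, 100) * 10**9))
```

Exact fractions avoid rounding noise when checking that the speed up stays strictly below k for every finite n.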

Important Notes-

Note-01:

- The aim of pipelined architecture is to execute one complete instruction in one clock cycle.

- In other words, the aim of pipelining is to maintain CPI ≅ 1.

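- Practically, CPI ≅ 1 is not achieved, because the pipeline needs (k – 1) extra cycles to fill and stalls on branches, interrupts and conflicts. The delay added by the registers further lengthens every clock cycle.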

- Ideally, a pipelined architecture executes one complete instruction per clock cycle (CPI=1).
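Even without stalls, the average CPI of a pipeline only approaches 1 as n grows, because the (k + n – 1) cycles are shared among n instructions. A minimal sketch, assuming an ideal pipeline; the function name is hypothetical.

```python
from fractions import Fraction


def pipelined_cpi(n, k):
    """Average clock cycles per instruction for an ideal k-stage pipeline."""
    return Fraction(k + n - 1, n)


for n in (1, 10, 1000):
    print(n, float(pipelined_cpi(n, 5)))
# 1 5.0
# 10 1.4
# 1000 1.004
```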

Note-02:

- The maximum speed up that can be achieved is always equal to the number of stages.

- This is achieved when efficiency becomes 100%.

- Practically, efficiency is always less than 100%.

- Therefore, speed up is always less than the number of stages in a pipelined architecture.
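The link between efficiency and maximum speed up can be checked numerically. This sketch assumes the usual definition efficiency = speed up / k, which reaches 100% exactly when speed up equals k; the function name is hypothetical.

```python
from fractions import Fraction


def efficiency(n, k):
    """Efficiency = speed up / k, with speed up = n * k / (k + n - 1).

    This simplifies to n / (k + n - 1).
    """
    speed_up = Fraction(n * k, k + n - 1)
    return speed_up / k


for n in (1, 100, 1_000_000):
    print(n, f"{float(efficiency(n, 5)):.4%}")
```

For any finite n the result stays below 100%, which is why the speed up stays below k.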

Note-03:

Under ideal conditions,

- One complete instruction is executed per clock cycle i.e. CPI = 1.

- Speed up = Number of stages in pipelined architecture

Note-04:

- Experiments show that a 5-stage pipelined processor gives the best performance.

Note-05:

If only one instruction has to be executed, then-

- Non-pipelined execution gives better performance than pipelined execution.

- This is because the registers in a pipelined architecture add delay, and every stage is stretched to the cycle time set by the slowest stage.

- Thus, one instruction takes less time in a non-pipelined architecture.
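A small numeric comparison makes this concrete. The sketch assumes the non-pipelined processor has no inter-stage registers, so one instruction takes the sum of the stage delays, while the pipelined processor needs k cycles of (slowest stage + register delay). The function name and delays are hypothetical.

```python
def single_instruction_times(stage_delays, register_delay):
    """Return (non_pipelined, pipelined) time for ONE instruction."""
    k = len(stage_delays)
    # No registers between stages: the instruction just flows through them.
    non_pipelined = sum(stage_delays)
    # Pipelined: k clock cycles, each as long as the slowest stage plus its register.
    cycle = max(stage_delays) + register_delay
    pipelined = k * cycle
    return non_pipelined, pipelined


print(single_instruction_times([60, 50, 90, 80], 10))  # (280, 400)
```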

Note-06:

High efficiency of pipelined processor is achieved when-

- All the stages are of equal duration.

- There are no conditional branch instructions.

- There are no interrupts.

- There are no register and memory conflicts.

Performance degrades in the absence of these conditions.
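A simplified way to see the degradation is to add the cycles lost to stalls onto the ideal (k + n – 1) count. The sketch below assumes the stalls can be summarised as a single total number of extra cycles (`stall_cycles`, a hypothetical input); real processors have more detailed hazard behaviour.

```python
def pipelined_cycles_with_stalls(n, k, stall_cycles):
    """Ideal pipelined cycles (k + n - 1) plus the total cycles lost to stalls."""
    return k + n - 1 + stall_cycles


def speed_up_with_stalls(n, k, stall_cycles):
    """Non-pipelined cycles (n * k) divided by pipelined cycles including stalls."""
    return n * k / pipelined_cycles_with_stalls(n, k, stall_cycles)


# 100 instructions, 5 stages: no stalls vs. 20 branches costing 2 cycles each
print(speed_up_with_stalls(100, 5, 0))
print(speed_up_with_stalls(100, 5, 20 * 2))
```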


Watch video lectures by visiting our YouTube channel LearnVidFun.