Current Research Trends in Machine Learning in Education-

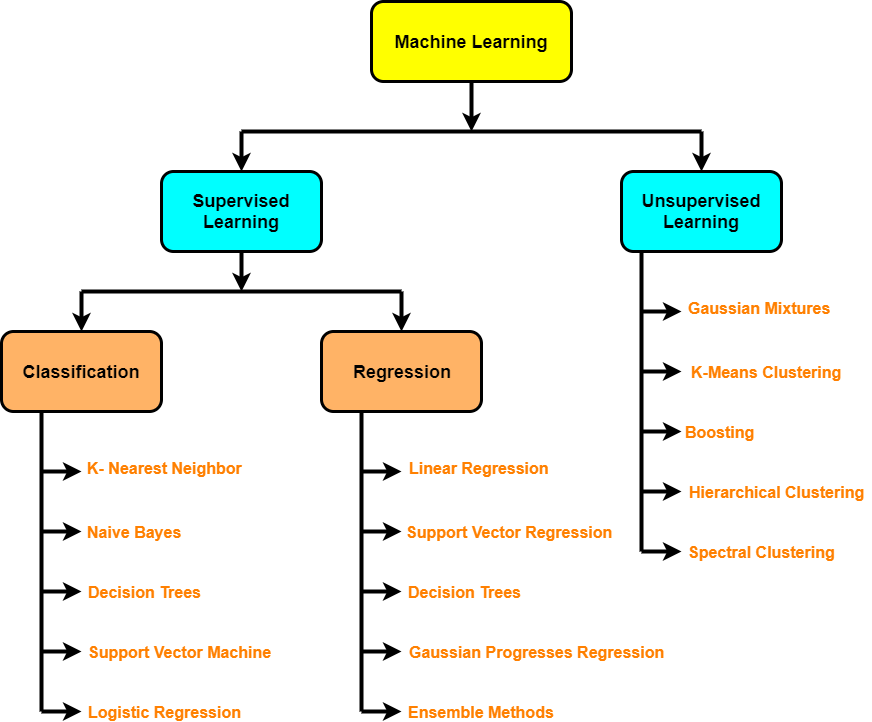

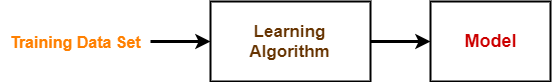

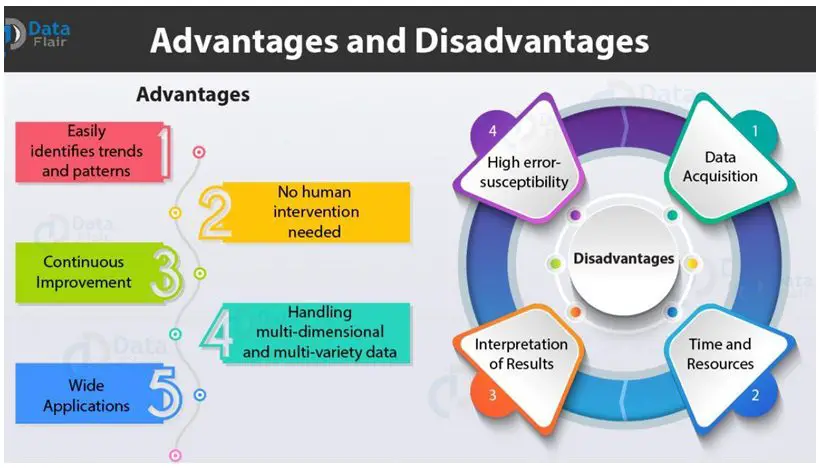

Technology plays a crucial role in everyday life. Many functions in nearly every sphere of human activity are now automated to make processes simpler, faster, and more productive. Although some people oppose the spread of technology, its advantages are hard to deny. One branch of technology that is increasingly present in education is machine learning. It’s the study of algorithms and statistical models that allow computers to perform tasks by learning patterns from data instead of following explicitly programmed rules. Thanks to those algorithms and statistical models, devices can fulfill a wide range of tasks and objectives.

Many students want to become specialists in this field, but the learning process is quite complex. Some of them use the assistance of a Cheap Research Paper Writing Service to resolve their difficulties with the help of professional academic writers. That is a separate question, which will be discussed in future posts. In the meantime, we’d like to highlight current research trends in machine learning in education and clarify their value for the educational sector.

The Main Developers-

First, we want to outline the main companies in machine learning that are expected to collaborate actively with the education system. Their devices and applications are likely to shape the effectiveness of the learning process for the next decade or more.

The main role in machine learning for education is expected to be played by:

- IBM

- Microsoft

- Amazon

- Quantum Adaptive Learning

- DreamBox Learning

- Pearson

- Bridge-U and others

Adoption Of Cloud Technology-

One of the major trends in education is the adoption of cloud-based technology. Its most important benefit is that it reduces expenses for educational institutions. It has also divided the educational process into manageable segments and simplified it. Students can easily and quickly access the required information in any discipline, complete the necessary tasks, and save the results online. This information stays available until users decide to delete it.

Digital Assistants-

Although the role of teachers is important, digital assistants offer multiple benefits for all participants in the educational process. Teachers and professors are human, and they cannot devote all their time to their students. Meanwhile, students frequently have questions when they are already at home and it’s quite late. This is where a digital assistant comes in handy.

This trend means that students can resolve their academic difficulties at any suitable time. Digital assistants are AI-based (artificial intelligence) programs. They draw on the knowledge and experience of real educators and can answer most questions. For example, suppose a student doesn’t know how to complete an expository essay. The AI instructor will provide tips and recommendations, point to useful websites, and so on. Students may receive educational feedback day and night!
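To make the idea more concrete, here is a minimal sketch of how an assistant might match a student's question to a stored answer. It is a toy under stated assumptions, not how any real product works: the FAQ entries, the `tokenize` and `answer` helpers, and the word-overlap scoring are all invented for illustration, while production assistants rely on far more capable language models.

```python
import re

# Hypothetical FAQ entries, invented for illustration only.
FAQ = {
    "How do I structure an expository essay?":
        "Start with a thesis, support it with evidence in each body paragraph, "
        "and end with a conclusion.",
    "When is the homework deadline?":
        "Check the course calendar published by your instructor.",
    "How do I cite a website?":
        "Follow the citation style required by your course, such as APA or MLA.",
}


def tokenize(text):
    """Lowercase the text and return the set of words it contains."""
    return set(re.findall(r"[a-z]+", text.lower()))


def answer(question, faq=FAQ):
    """Return the answer whose stored question shares the most words with the query."""
    query = tokenize(question)
    best_answer, best_score = None, 0.0
    for known_question, known_answer in faq.items():
        known = tokenize(known_question)
        union = query | known
        # Jaccard similarity: shared words divided by all distinct words.
        score = len(query & known) / len(union) if union else 0.0
        if score > best_score:
            best_answer, best_score = known_answer, score
    # Fall back to a human when nothing overlaps at all.
    return best_answer or "Sorry, I don't know. Please ask your teacher."


print(answer("How should I write an expository essay?"))
```

Because the lookup is just a function call, it can respond at any hour, which is the point of the trend. The fallback message reflects that the assistant supplements teachers rather than replacing them.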

Studying Education Market-

Machine learning may also guide future development in the educational sector. The technology uses algorithms that gather information about education, the objectives of a country’s policy, and the needs of participants (learners and educators). It then analyzes this information to produce clear insights. It may suggest effective solutions to current problems and offer new methodologies in the future. For example, it can provide predictions for education market dynamics, competition in the field, regional analysis, etc.
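As a rough illustration of what "analyzing information to predict a trend" can mean, the sketch below fits a straight line to a few yearly figures and extends it one year ahead. The enrollment numbers are hypothetical, the `fit_line` helper is our own, and a simple least-squares line stands in for the much richer models a real market study would use.

```python
def fit_line(xs, ys):
    """Fit y = slope * x + intercept to the points by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical yearly enrollments in an online course (not real data).
years = [2019, 2020, 2021, 2022, 2023]
enrollments = [1000, 1080, 1210, 1290, 1420]

slope, intercept = fit_line(years, enrollments)
forecast_2024 = slope * 2024 + intercept

print(f"Estimated growth per year: {slope:.0f} students")
print(f"Forecast for 2024: {forecast_2024:.0f} students")
```

A forecast like this is only as good as the data behind it, so it is best treated as one input to a decision rather than a verdict.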

Virtual & Augmented Reality-

This research trend has been popular and promising for at least 5 years. It is unlikely to fade in the coming decades, and its productivity should only increase. This technology significantly enhances the learning process because it provides a life-like experience. Students can put on special glasses and headphones to see and hear a journey into ancient times, watch how a manufacturing process runs, or observe how a surgery is carried out. This practice increases students’ interest in the educational process.

Online Assessments-

Another crucial trend matters most to teachers and professors: the online assessment of students. It’s no secret that educators are overloaded with responsibilities and duties. They frequently don’t have enough time for academic consultations, for helping their students, or for their private lives. Technology can ease this serious problem.

Many colleges and universities already use online evaluation of learning results. Smart AI checks and analyzes the results of every lesson, lecture, and test. It compiles grades so that educators don’t have to. It likewise plans future events, creates reasonable schedules, and so on. Thus, teachers and professors can be partially relieved of this burden.
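The simplest form of automated assessment can be sketched in a few lines. The example below grades multiple-choice submissions against an answer key and summarizes the results. The answer key, the student names, and the `grade` and `summarize` helpers are all hypothetical, and real assessment platforms handle far more than multiple-choice questions.

```python
from statistics import mean

# Hypothetical answer key and student submissions, invented for illustration.
ANSWER_KEY = {"q1": "b", "q2": "d", "q3": "a", "q4": "c"}

SUBMISSIONS = {
    "alice": {"q1": "b", "q2": "d", "q3": "a", "q4": "c"},
    "bob": {"q1": "b", "q2": "a", "q3": "a", "q4": "b"},
    "carol": {"q1": "c", "q2": "d", "q3": "a"},  # q4 left blank
}


def grade(submission, key=ANSWER_KEY):
    """Return the percentage of questions answered correctly."""
    # A missing answer never matches the key, so it counts as wrong.
    correct = sum(1 for q, expected in key.items() if submission.get(q) == expected)
    return 100.0 * correct / len(key)


def summarize(submissions, key=ANSWER_KEY):
    """Grade every student and report individual scores plus the class average."""
    scores = {student: grade(answers, key) for student, answers in submissions.items()}
    return {"scores": scores, "class_average": mean(scores.values())}


report = summarize(SUBMISSIONS)
for student, score in report["scores"].items():
    print(f"{student}: {score:.0f}%")
print(f"Class average: {report['class_average']:.1f}%")
```

Even a small script like this removes a repetitive task from a teacher's day, while open-ended work such as essays still benefits from human review.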

Adoption Of Machine Learning-

We also want to draw attention to how machine learning is implemented in the educational sector. Even if the technology is beneficial and highly effective, using it incorrectly can undo those benefits. The following information will be helpful for educators and educational institutions.

Take the following recommendations into account:

- Set clear objectives- Before an institution implements any technological devices and programs, it should clarify its objectives to understand how to use the technology effectively.

- Consider finances- The administration of every school, college, and university should assess its budget. Technology has its price, and every educational institution should be confident that it can afford to implement new technology.

- Stick to realism- The use of technology should be realistic. If the institution doesn’t have enough qualified experts or funds to implement machine learning, it shouldn’t adopt it prematurely.

- Educate teaching staff- Not all teachers and professors know how to work with new technology. Therefore, every institution should provide training courses for its teaching staff.

If educational institutions take these recommendations into account, they are far more likely to succeed. They will avoid possible delays and complications, and machine learning trends will serve educators and learners effectively.

There is no doubt that machine learning is an important field and that education already uses it. By understanding how certain applications work, educators and learners can apply them to boost the productivity of the learning process. The trends highlighted in this article are likely to shape the future course of education.